Initialize and configure the AI Content Assistant via Essentials

This guide walks you through setting up and configuring the BrXM AI Content Assistant using the Essentials application.

Configuring the Content Assistant via Essentials results in:

- Addition of new dependencies in your cms-dependencies pom file.

- Addition of JCR configuration under /hippo:configuration/hippo:modules/ai-service/hipposys:moduleconfig

Initialize and configure via Essentials

You can initialize and configure the AI Content Assistant with the Essentials application. To do so:

-

Go to Essentials.

-

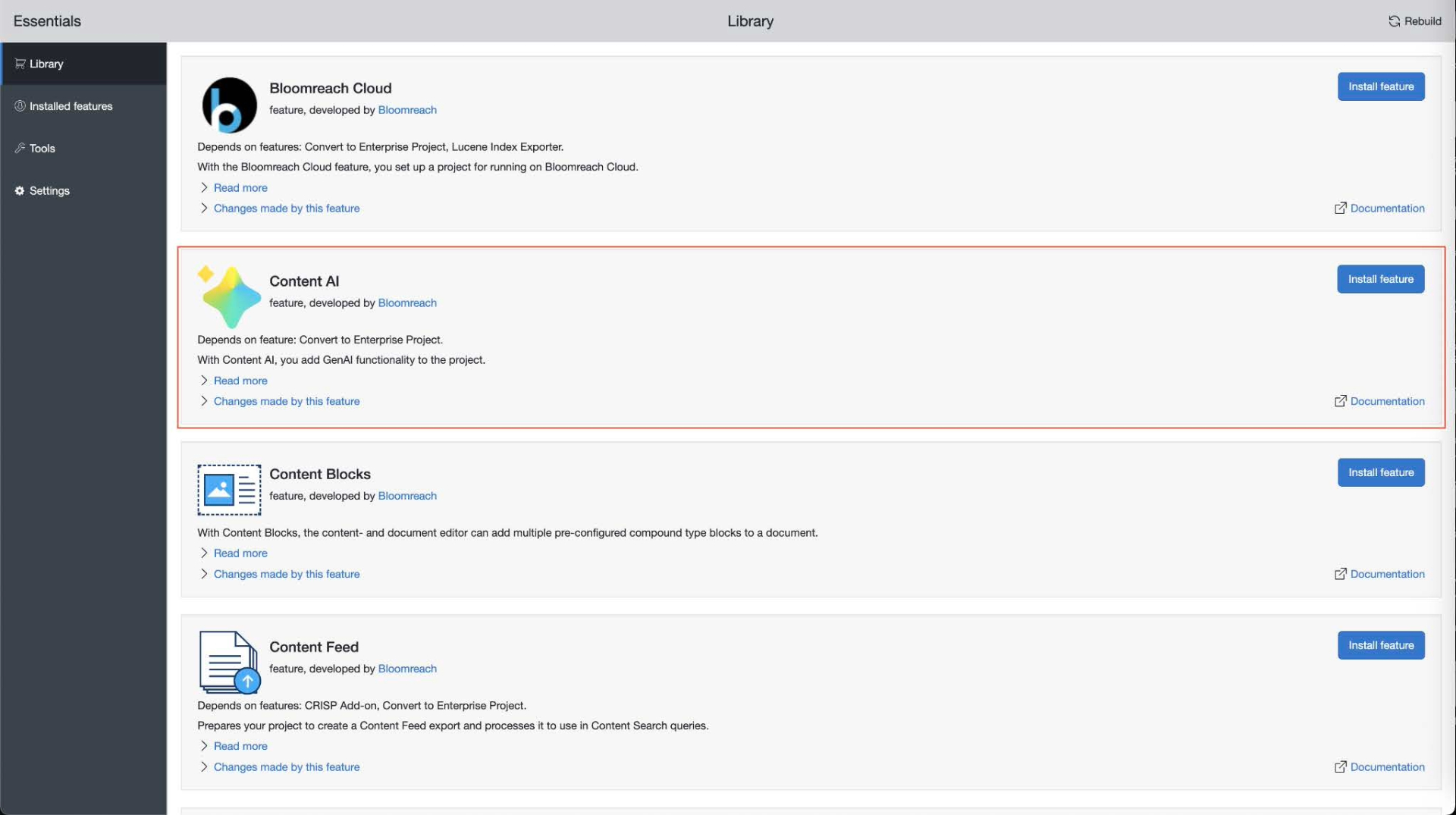

Go to Library - Make sure Enterprise features are enabled.

-

Look for Content AI and click Install feature.

-

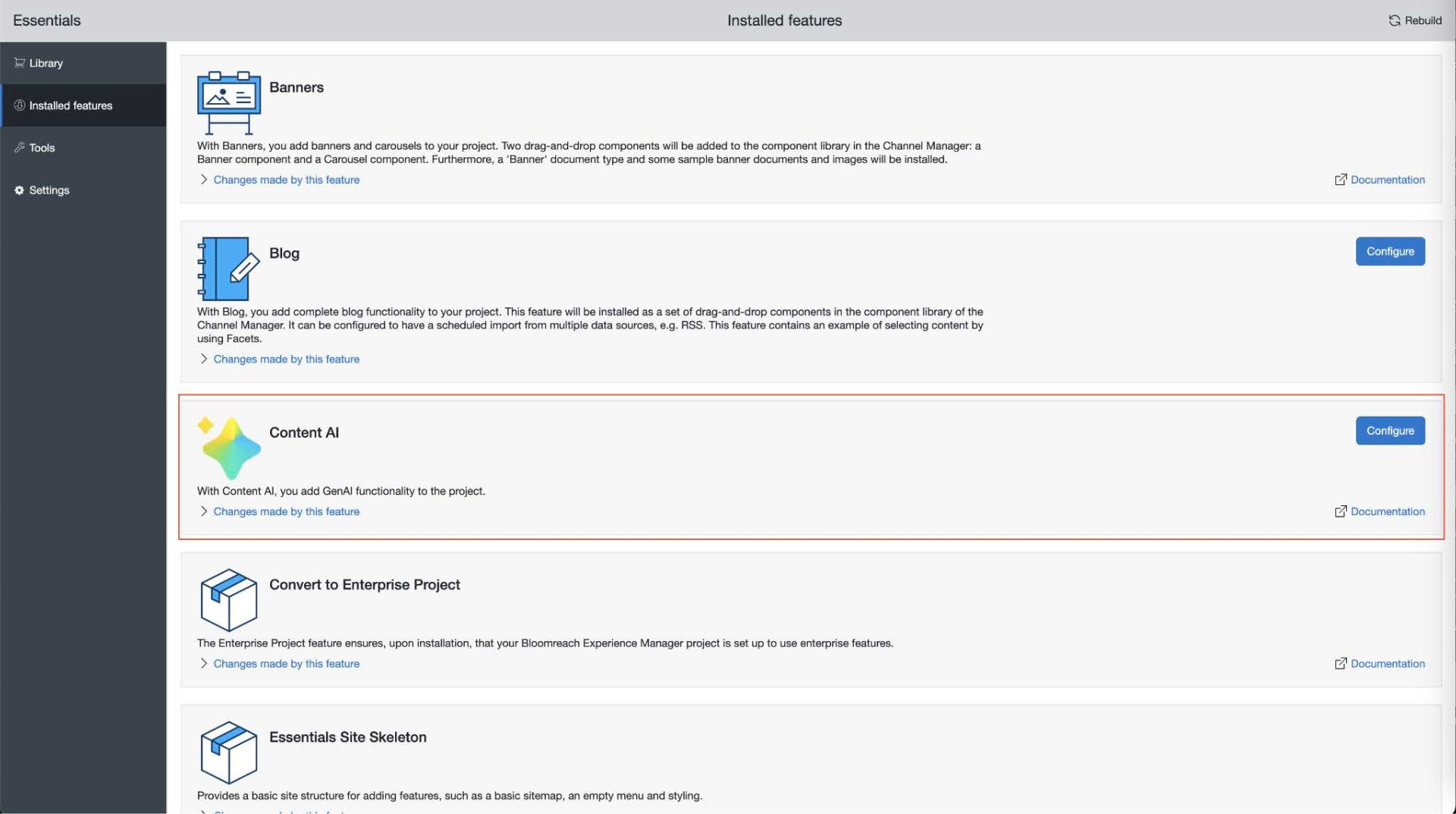

Once your project has restarted, go to Installed features.

-

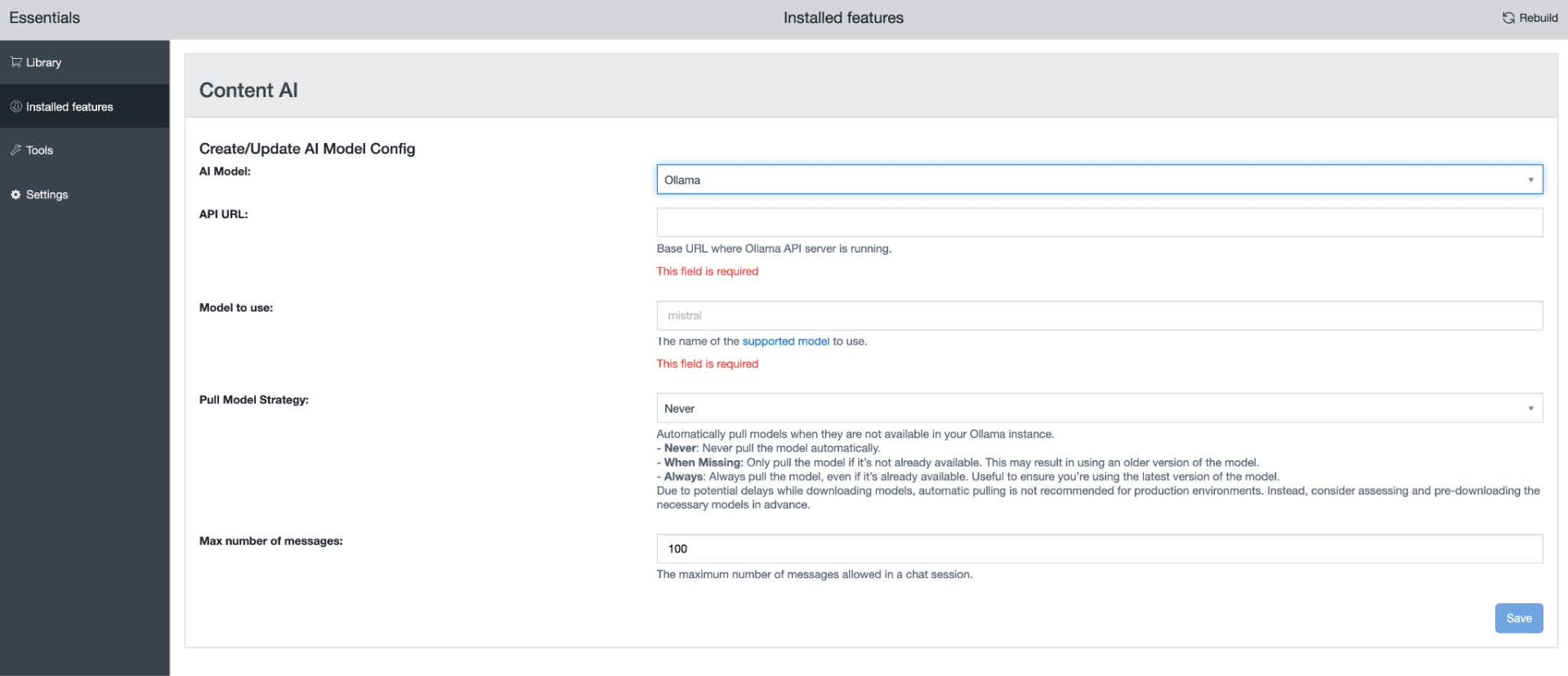

Find Content AI and click Configure.

-

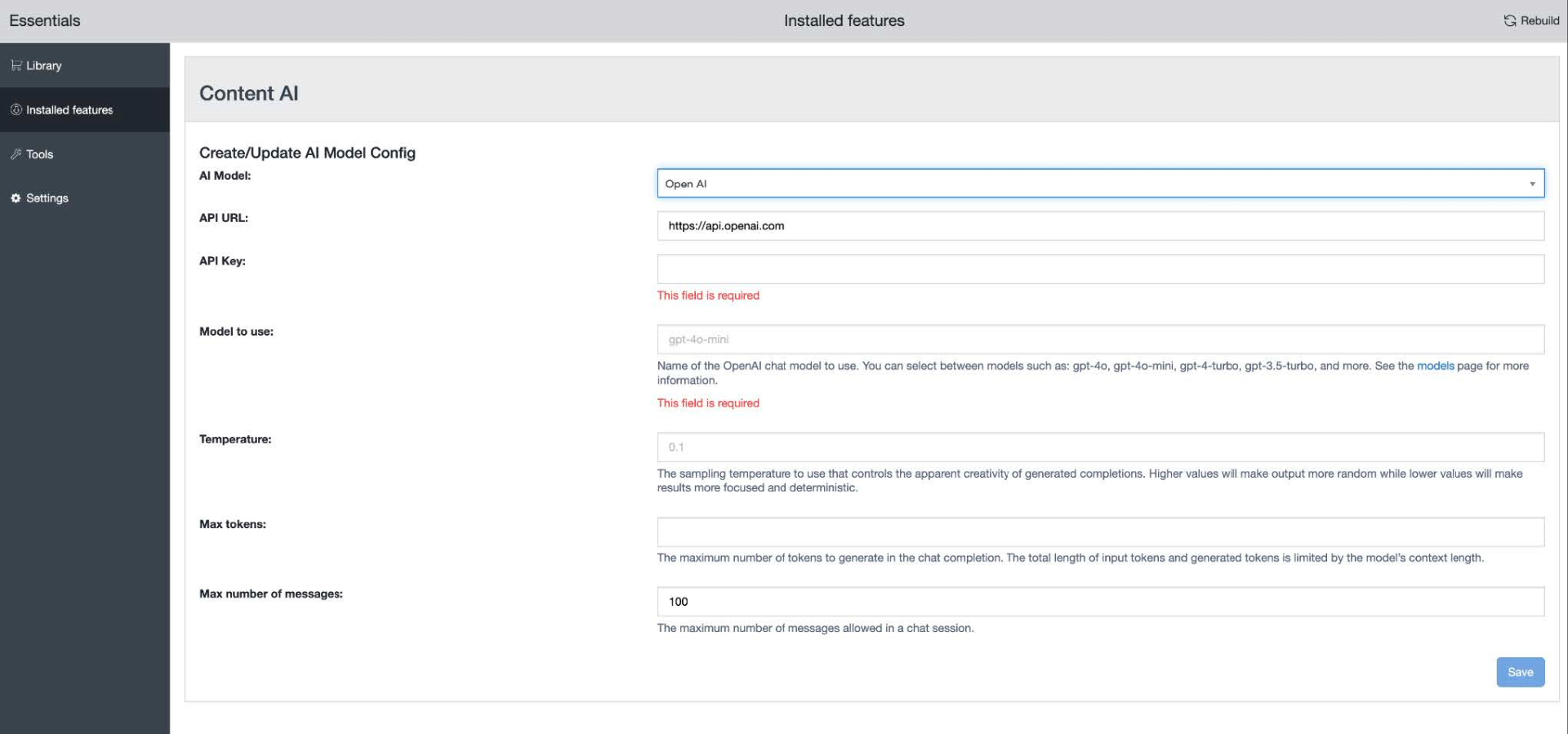

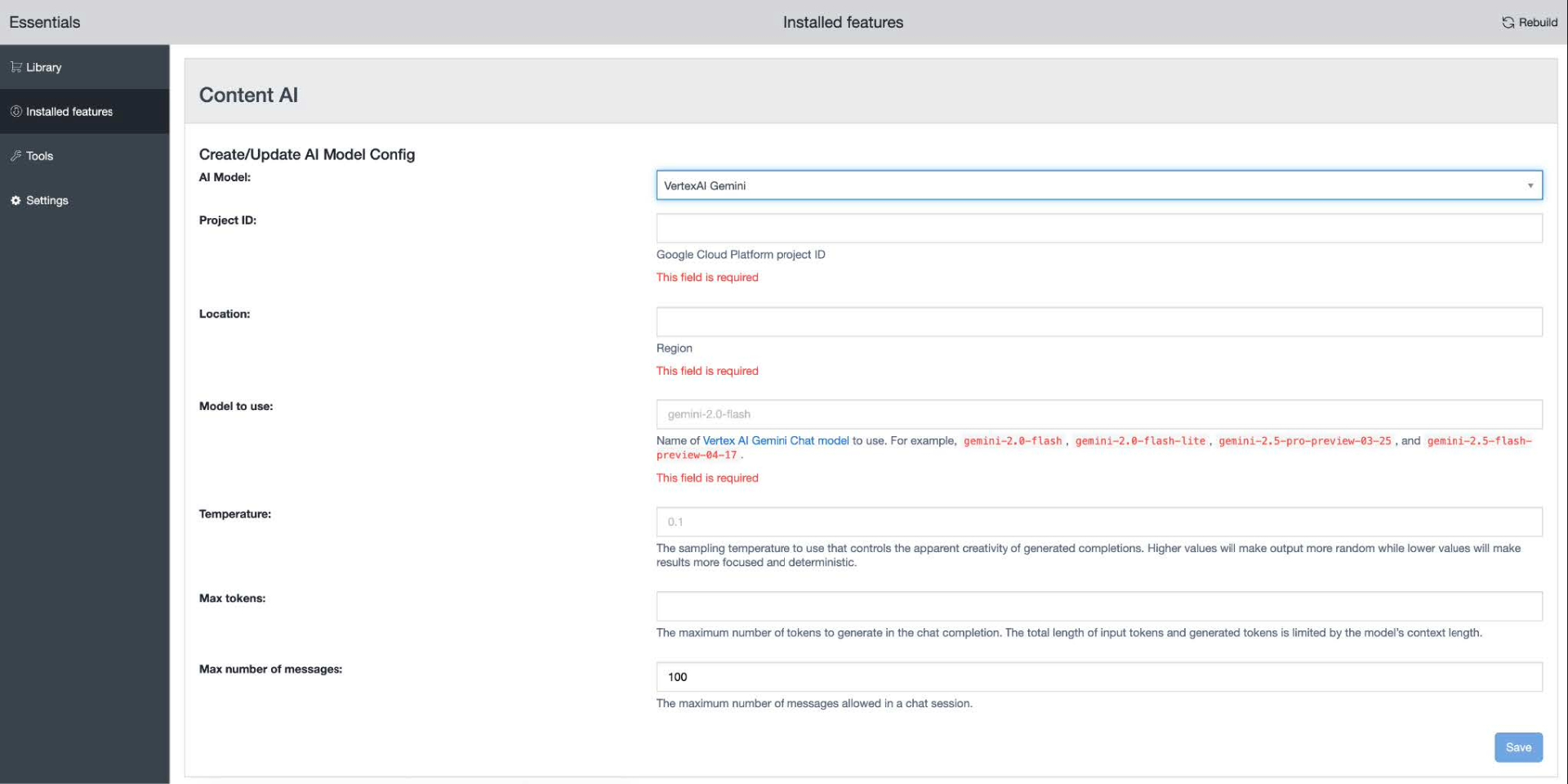

Choose the desired AI Model from the available options of supported providers.

-

Configure the other details such as API URL (endpoint), API key, and so on. Each provider has different configuration options (see Configuration options section below).

-

Once you’re done, click Save.

-

Lastly, rebuild and restart your project again.

Configuration options

Each model provider can have different settings to configure, such as:

- API key/project ID: Enter your API key or project ID, depending on your model provider.

- Model to use: Specify the exact model name and version to use in the AI Assistant. This lets you choose the best-performing model for a particular type of task.

- Temperature: Set the temperature of the model. This controls the creativity, depth, and randomness of the AI responses.

- Max tokens: Specify the maximum number of tokens that a conversation can use. This helps keep your token usage in check so that it doesn't exceed the limit allowed by your AI provider.

- Max messages: Limit the number of messages allowed in a single conversation. Once users reach the limit, they cannot send more messages, which keeps token usage in check.
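As a rough illustration of how the Max messages and Max tokens limits bound a conversation, the sketch below models a simple per-conversation budget. It is not the Content Assistant's implementation: the class name `ConversationBudgetSketch`, its methods, and the rule that a message is refused once either limit is reached are all assumptions made for this example.

```java
// Hypothetical model of per-conversation limits; not part of the product API.
class ConversationBudgetSketch {

    private final int maxMessages;
    private final int maxTokens;
    private int messagesSent = 0;
    private int tokensUsed = 0;

    ConversationBudgetSketch(int maxMessages, int maxTokens) {
        if (maxMessages < 0 || maxTokens < 0) {
            throw new IllegalArgumentException("limits must not be negative");
        }
        this.maxMessages = maxMessages;
        this.maxTokens = maxTokens;
    }

    /** Returns true while neither limit has been reached. */
    boolean canSend() {
        return messagesSent < maxMessages && tokensUsed < maxTokens;
    }

    /**
     * Records one message and the tokens it consumed.
     * Returns false (and records nothing) if a limit was already reached.
     */
    boolean record(int tokens) {
        if (!canSend()) {
            return false;
        }
        messagesSent++;
        tokensUsed += tokens;
        return true;
    }

    public static void main(String[] args) {
        // Assumed limits: at most 2 messages and 1000 tokens per conversation.
        ConversationBudgetSketch budget = new ConversationBudgetSketch(2, 1000);
        System.out.println(budget.record(300)); // true
        System.out.println(budget.record(300)); // true
        System.out.println(budget.record(300)); // false: message limit reached
    }
}
```

In this simplified model, whichever limit is hit first ends the conversation, so a low Max messages value caps token usage even when individual messages are short.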

The following settings are set through properties:

- OpenAI completions path: add the property spring.ai.openai.chat.completions-path

- LiteLLM completions path: add the property spring.ai.litellm.chat.completions-path

- Maximum allowed size for a referenced PDF: add the property brxm.ai.chat.pdf.max-size-bytes

See Initialize and configure via Properties for more property names. Provide them either via your JCR configuration or via properties files.
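As a sketch of what such entries look like in properties-file syntax, the example below parses two of the property names listed above with `java.util.Properties`. The values are placeholders chosen for illustration, and the class `AiPropertiesSketch` and its helper methods are hypothetical; how the project actually loads its properties files is not shown here.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

// Hypothetical helper for illustration; only the property names come from this page.
class AiPropertiesSketch {

    // Placeholder values (assumptions), written in properties-file syntax.
    static final String SAMPLE = String.join("\n",
            "spring.ai.openai.chat.completions-path=/v1/chat/completions",
            "brxm.ai.chat.pdf.max-size-bytes=5242880");

    /** Parses properties-file text into a Properties object. */
    static Properties load(String text) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(text));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props;
    }

    /** Reads the PDF size limit in bytes, or returns the fallback if it is not set. */
    static long maxPdfSizeBytes(Properties props, long fallback) {
        String raw = props.getProperty("brxm.ai.chat.pdf.max-size-bytes");
        return raw == null ? fallback : Long.parseLong(raw.trim());
    }

    public static void main(String[] args) {
        Properties props = load(SAMPLE);
        System.out.println(props.getProperty("spring.ai.openai.chat.completions-path")); // /v1/chat/completions
        System.out.println(maxPdfSizeBytes(props, 0L)); // 5242880
    }
}
```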

The Vector Store and Ingestion process also cannot currently be configured via Essentials. See Initialize and configure the Vector Store and Ingestion for the property names, and provide them either via your JCR configuration or via properties files.

Maintenance Scripts

The AI module installs Groovy scripts for maintaining your Vector Store. For details, see Maintenance Groovy Scripts.