Control Hippo CMS by voice using the Google Assistant

Luís Pedro Lemos

2018-04-30

Do you remember using Siri for the first time? I know I do. When Apple launched its voice assistant in 2011, talking to a phone felt futuristic yet oddly out of place.

Voice assistants are becoming more popular by the day. After Amazon launched Alexa, Google soon followed with its Home series. And now even Apple has jumped aboard the voice train.

After the Christmas holidays, the Bloomreach office was flooded with Google Home Minis. You can control all kinds of things by voice nowadays, so why not a content management system (CMS)? And so the idea was born to control Bloomreach Experience, or rather Hippo CMS, by voice.

How cool would it be to use a voice assistant as a dictation machine for a CMS?

Just like in Mad Men: “Siri, get me Roger on the phone, will you?”

Don Draper, top ad man at a Madison Avenue advertising firm, dictates directly to his secretary, who uses crazy fast writing skills to capture every word. Afterwards, the secretary transforms Don’s poor attempts at communication into a polished, clear document.

We don't have dictation machines — and we definitely don't have secretaries. But we have voice assistants.

During a Friday project, some colleagues and I took a stab at integrating Bloomreach Experience with Google’s voice assistant to create a blog post and dictate its content, just like Don Draper in Mad Men.

It’s by no means production-ready; it’s just a fun project to try out the capabilities of voice assistants. Think of it as a “Hello World”.

So let’s dive in.

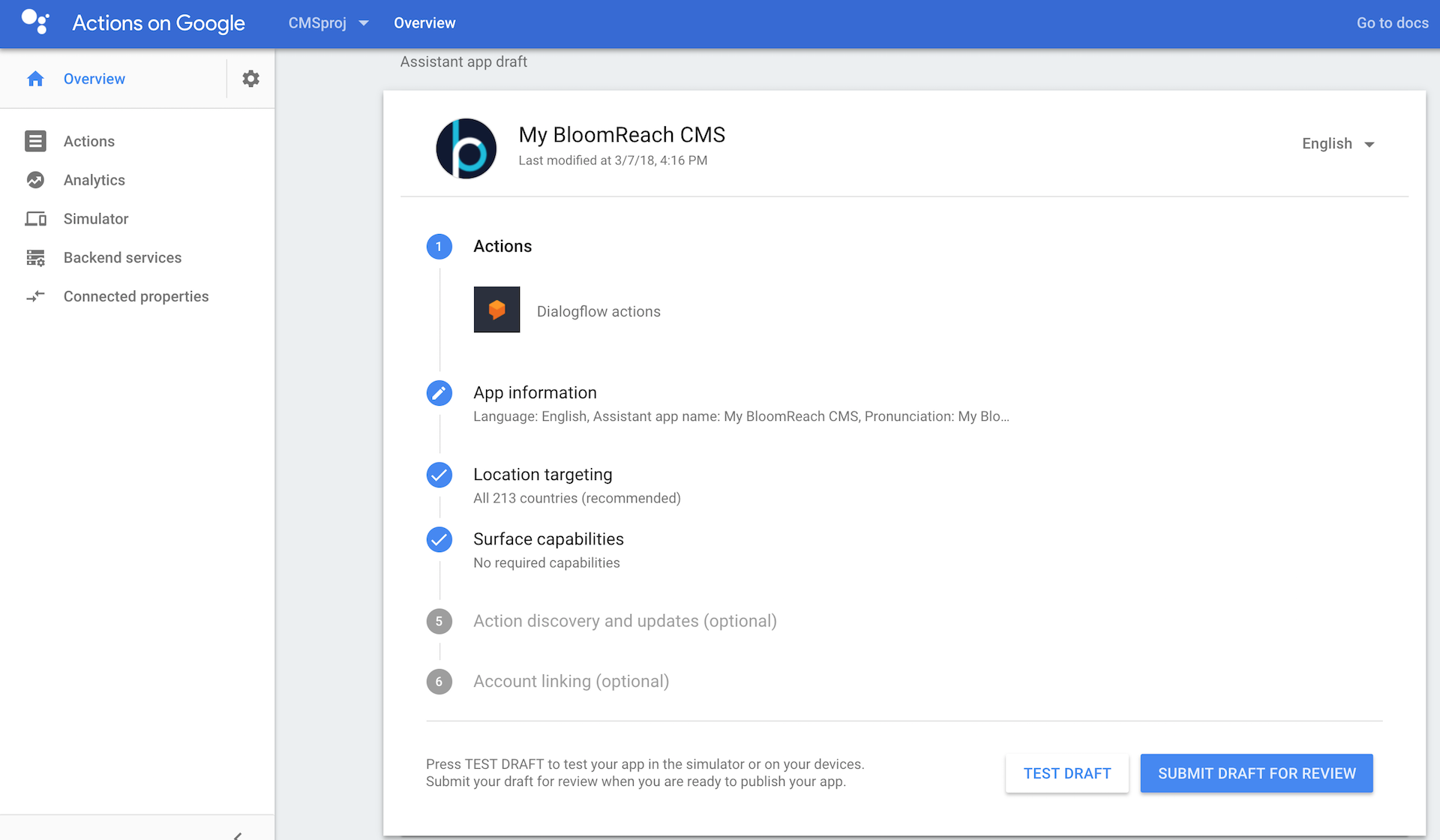

1. Create an “Actions on Google” account

The first thing you need is an “Actions on Google” account. “Actions on Google” is a platform that lets you extend the Google Assistant with your own applications.

2. Set up a new project in “Actions on Google”

To get started, set up a new project in “Actions on Google”. I called mine “My Bloomreach CMS”. In this project, I chose Dialogflow as the first “Action”.

Dialogflow

“Dialogflow is an end-to-end development suite for building conversational interfaces for websites, mobile applications, popular messaging platforms, and IoT devices.”

Dialogflow works with several concepts, such as “Invocation”, “Intent”, “User Says”, “Entities”, “Fulfillment Request”, “Response” and “Context”.

No worries, you don’t have to remember all of them. I will describe each one in the following steps.

3. Create Dialogflow Invocations

To start a conversation, the user needs to invoke the application, so Dialogflow always starts with an invocation. This simply means telling the Assistant that you want to talk to your app. In my case it’s “Hello Google, start My Bloomreach CMS”. The invocation is tied to the name of your application.

Once you’ve invoked your application, you are in your own environment. Inside your application you have intents.

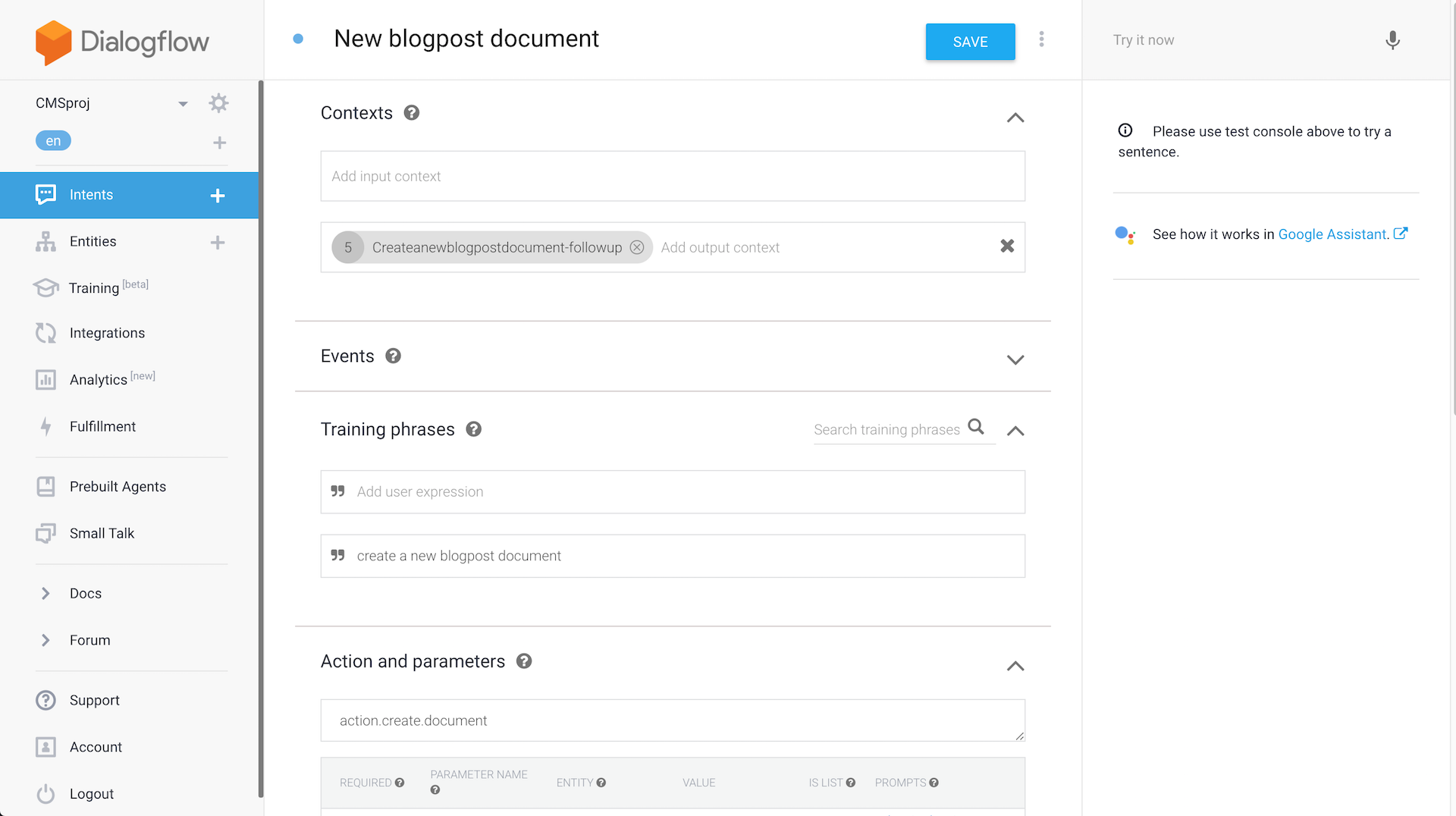

4. Determine actions and intents

In Dialogflow, an intent houses the elements and logic needed to parse information from the user and answer their requests. So you have to define what you want to do. For the “My Bloomreach CMS” project, I created intents such as “Create a document” and “What are the latest news?”.

5. Configure “user says”

After creating your intents, you can add “User Says” training phrases. “For Google to understand the question, it needs examples of how the same question can be asked in different ways. Developers add these permutations to the Training Phrases section of the intent. The more variations added to the intent, the better your app will comprehend the user.” In other words, you add different ways of saying the same thing. You can say “Create a document”, “Create one document” or “Create a blog post document”. They all mean the same thing.

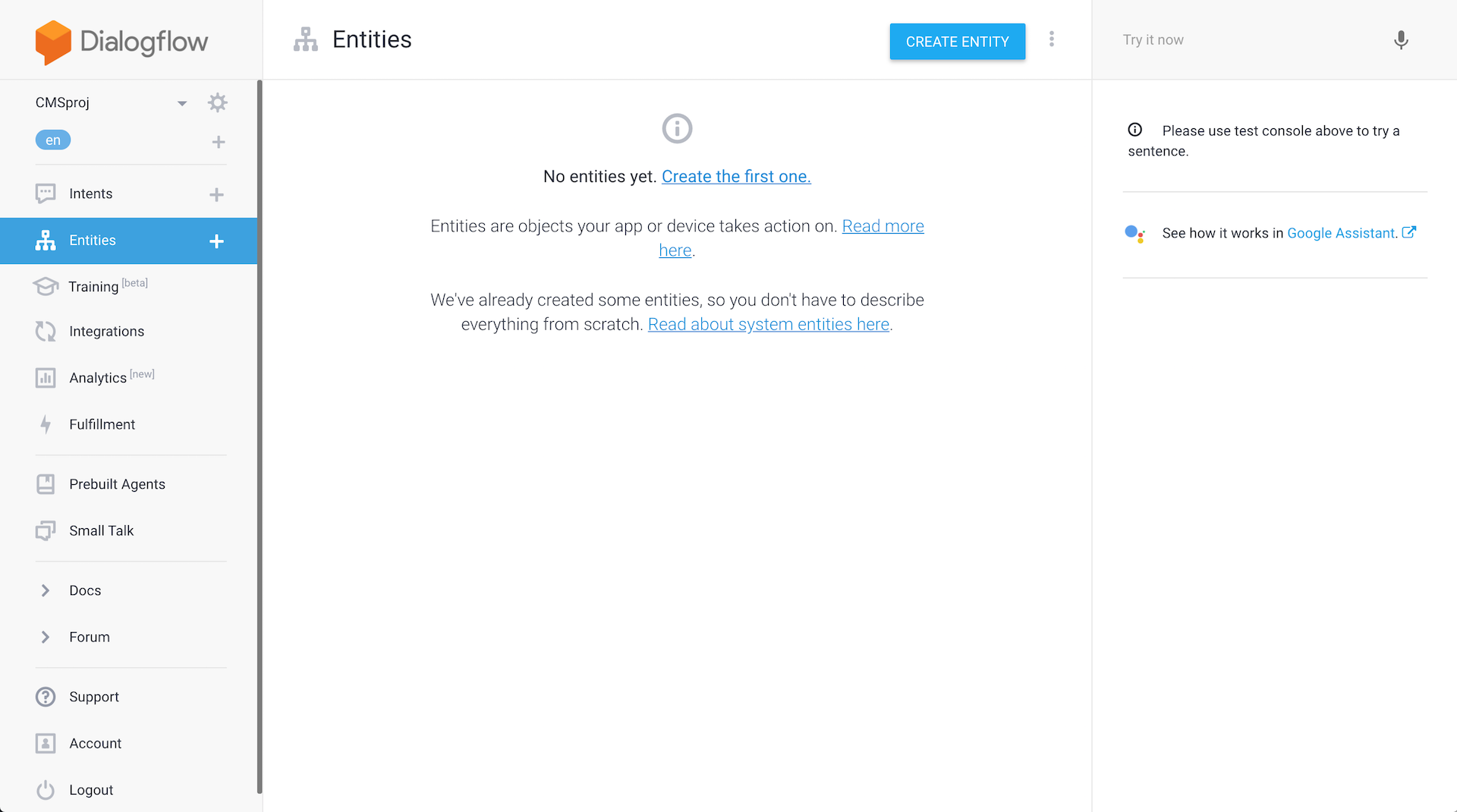

6. Define system entities

Next up are system entities. “The Dialogflow agent needs to know what information is useful for answering the user's request. These pieces of data are called entities. Entities like time, date, and numbers are covered by system entities. Other entities, like weather conditions or seasonal clothing, need to be defined by the developer so they can be recognized as an important part of the question.”

I use the default system entities for this simple project.

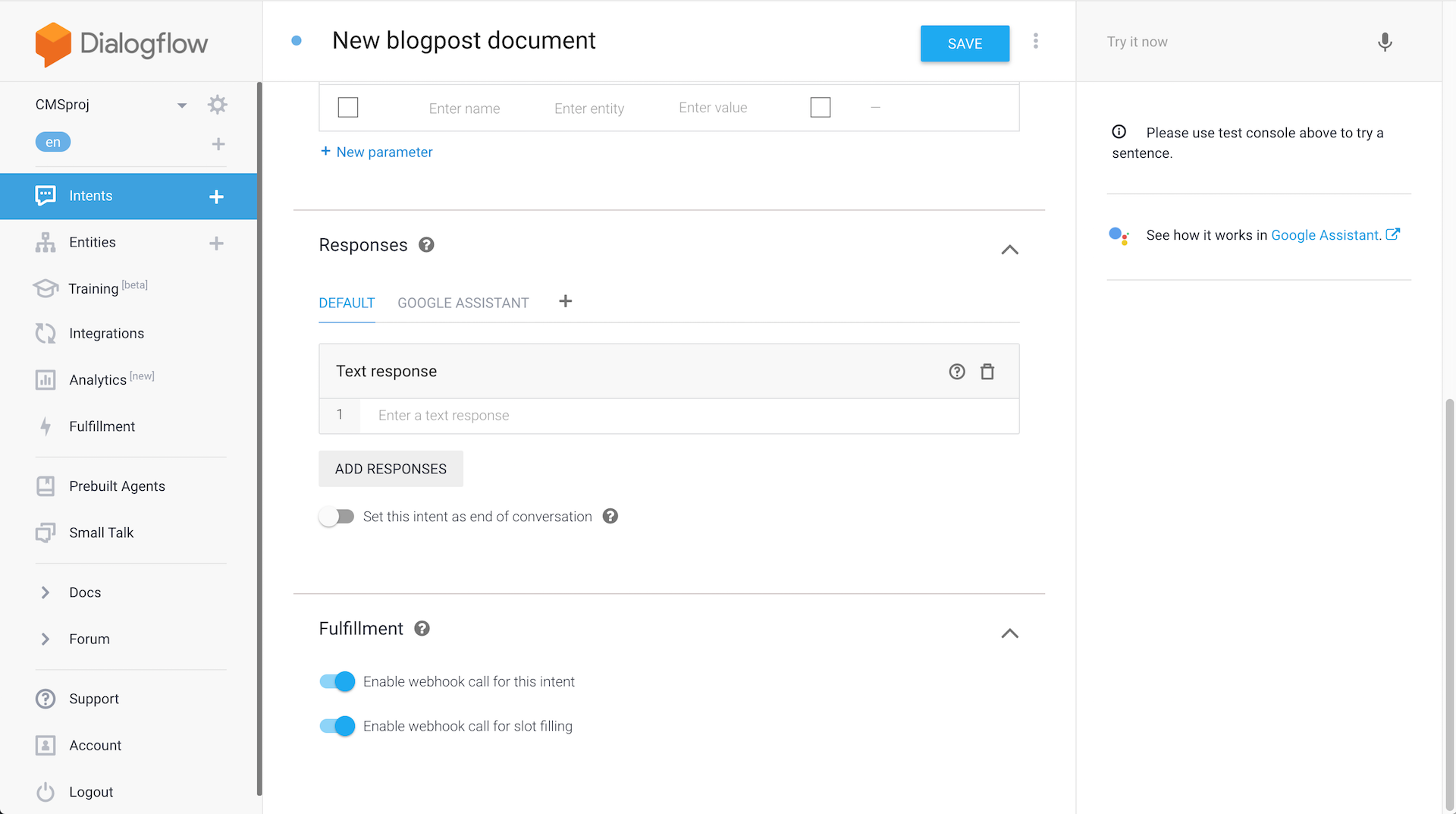

7. Create a fulfillment request

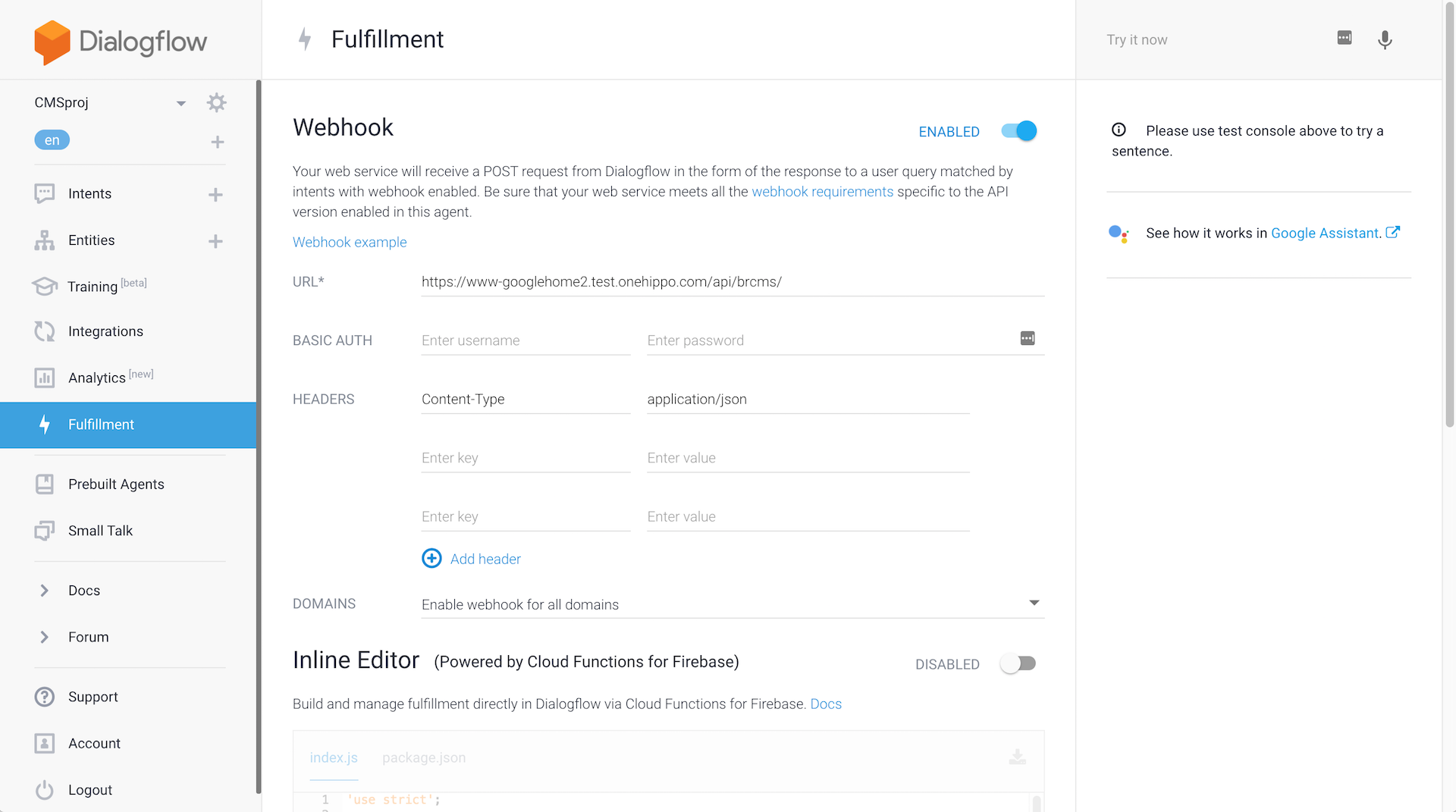

I created a webhook to the CMS. “Setting up a webhook allows you to pass information from a matched intent into a web service and get a result from it.” The CMS does something and then Google receives a response. Ideally, the response contains something for the Google Assistant to say.
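To make the round trip a little more concrete, here is a minimal sketch of the kind of JSON body a webhook could send back. It assumes the Dialogflow v2 webhook format, in which the sentence to be spoken goes into a `fulfillmentText` field; the class and method names (`WebhookReply`, `toJson`, `escapeJson`) are hypothetical and not taken from the project.

```java
/** Minimal sketch: builds the JSON body a webhook could send back to Dialogflow. */
final class WebhookReply {

    private WebhookReply() {
    }

    /** Escapes the characters that must not appear raw inside a JSON string. */
    static String escapeJson(String text) {
        StringBuilder out = new StringBuilder();
        for (char c : text.toCharArray()) {
            switch (c) {
                case '"':  out.append("\\\""); break;
                case '\\': out.append("\\\\"); break;
                case '\n': out.append("\\n"); break;
                case '\r': out.append("\\r"); break;
                case '\t': out.append("\\t"); break;
                default:
                    if (c < 0x20) {
                        // Remaining control characters become \ u00XX escapes.
                        out.append(String.format("\\u%04x", (int) c));
                    } else {
                        out.append(c);
                    }
            }
        }
        return out.toString();
    }

    /** Wraps the sentence the Assistant should speak in a "fulfillmentText" field. */
    static String toJson(String speech) {
        return "{\"fulfillmentText\":\"" + escapeJson(speech) + "\"}";
    }
}
```

In a real project a JSON library would normally do the serialization; the hand-written escaping only keeps the sketch free of dependencies.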

Hippo CMS

This is where it gets interesting. Let’s take a look at the CMS.

I created a simple project from the Hippo Maven archetype, deployed on the Bloomreach Cloud.

1. Integration

I created a REST resource in the CMS, implemented as a JAX-RS REST API. On the Google side, I created a webhook as explained above. It is as simple as pointing the webhook to the URL of the REST endpoint.

As an example, I could ask how many blog posts exist and the JSON request would look like this:

    {
      "responseId": "a4c6f0d1-048d-41cd-8b17-e87f40aecd97",
      "queryResult": {
        "queryText": "how many blogposts exist?",
        "parameters": {},
        "allRequiredParamsPresent": true,
        "fulfillmentMessages": [{
          "text": {
            "text": [""]
          }
        }],
        "intent": {
          "name": "projects/cmsproj-87617/agent/intents/4f496127-57bd-432d-a797-12a5c452c94b",
          "displayName": "how many blogposts exist?"
        },
        "intentDetectionConfidence": 1.0,
        "diagnosticInfo": {},
        "languageCode": "en"
      },
      "originalDetectIntentRequest": {
        "payload": {}
      },
      "session": "projects/cmsproj-87617/agent/sessions/91c614d8-0b0e-49e5-aa9a-fef4fa10498f"
    }

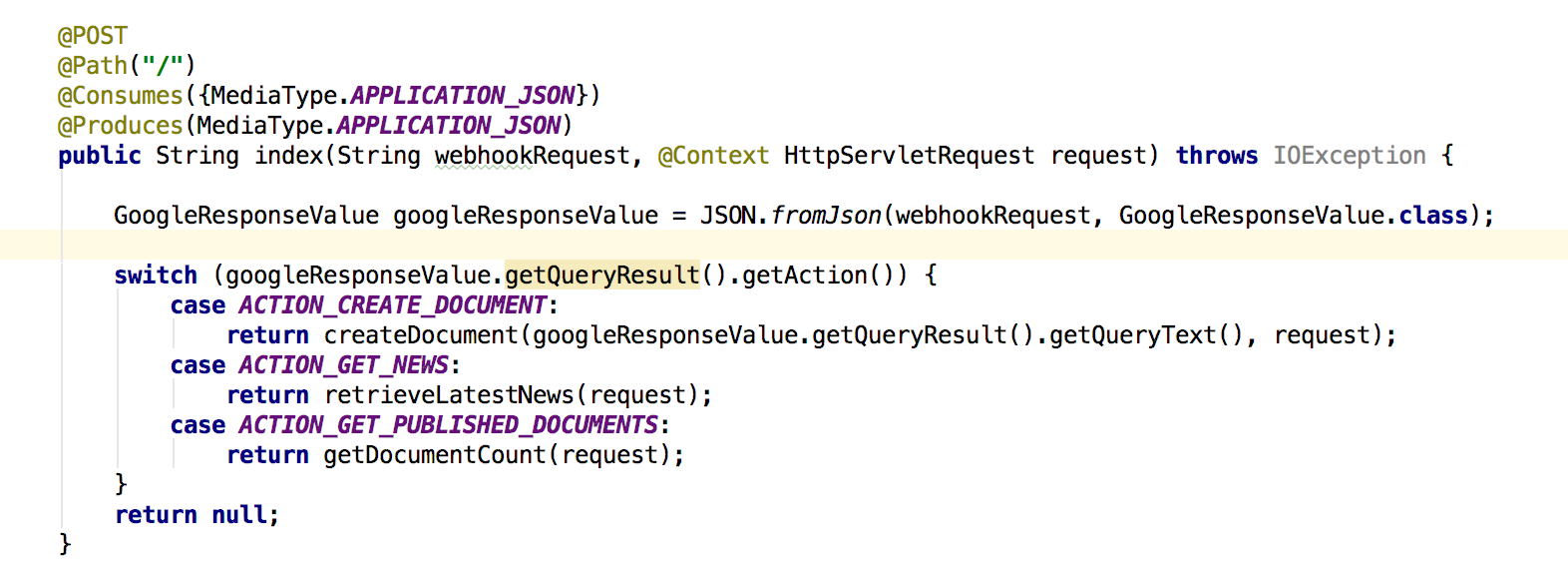

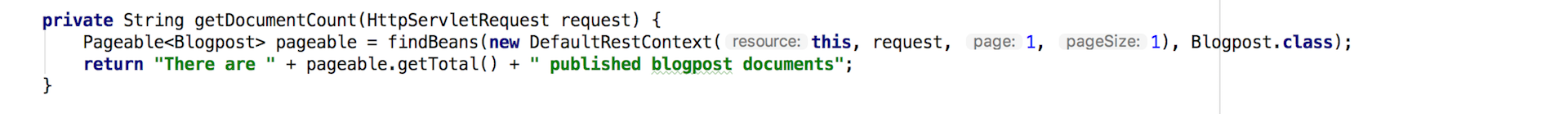

In the CMS, the request will be handled in the following way:

This is the entry point for the webhook. Depending on what was said to the Google Assistant, the CMS does something. The webhookRequest parameter carries Google's input as JSON, which we then convert to our own GoogleResponseValue:

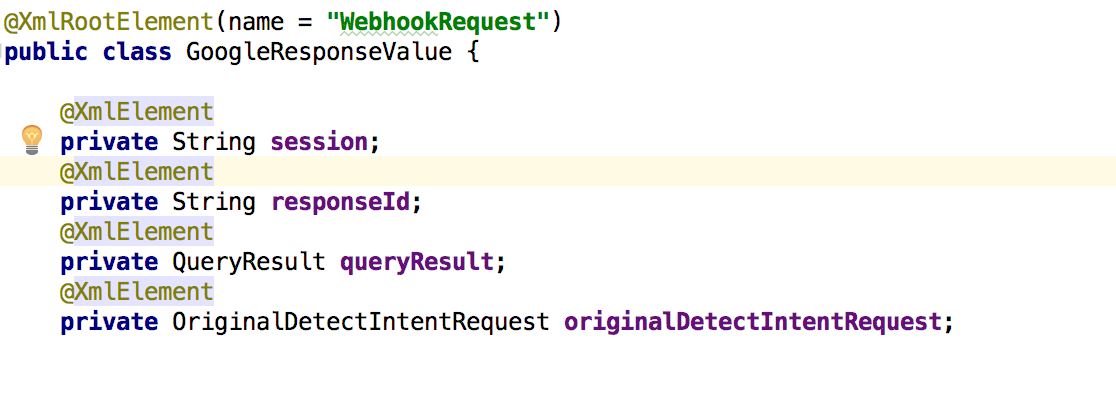
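As a rough sketch of such an entry point, assuming the matched intent's display name has already been read from the request, the dispatching step could look something like this. `IntentDispatcher`, `on` and `dispatch` are hypothetical names, the answers are placeholder values, and the JAX-RS wiring around it is left out so the example runs on a plain JDK.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Supplier;

/** Sketch of a webhook entry point that picks a handler by intent name. */
final class IntentDispatcher {

    private final Map<String, Supplier<String>> handlers = new HashMap<>();

    /** Registers the code that produces the spoken answer for one intent. */
    IntentDispatcher on(String intentDisplayName, Supplier<String> handler) {
        handlers.put(intentDisplayName, handler);
        return this;
    }

    /** Returns the answer for a matched intent, or a fallback sentence. */
    String dispatch(String intentDisplayName) {
        Supplier<String> handler = handlers.get(intentDisplayName);
        if (handler == null) {
            return "Sorry, I can't do that yet.";
        }
        return handler.get();
    }

    public static void main(String[] args) {
        IntentDispatcher dispatcher = new IntentDispatcher()
                // Placeholder answers; a real handler would query the CMS repository.
                .on("how many blogposts exist?", () -> "There are 3 blog posts.")
                .on("Create a document", () -> "I created a new blog post.");
        System.out.println(dispatcher.dispatch("how many blogposts exist?"));
    }
}
```

Keying the handlers on the intent's display name keeps the CMS side in step with the intents defined in Dialogflow; an unknown intent falls through to a polite fallback instead of an error.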

This is the wrapper for the data that we receive:
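A wrapper of this kind might look roughly like the sketch below. It is a hypothetical stand-in, not the project's actual GoogleResponseValue: the class name `WebhookRequestValue` and the selection of fields are assumptions that mirror only part of the JSON request shown earlier. The public fields are there so that a JSON mapper could populate them; the sketch itself uses no library.

```java
/** Hypothetical wrapper mirroring part of the Dialogflow request shown earlier. */
final class WebhookRequestValue {

    /** Mirrors the "intent" object. */
    static final class Intent {
        public String name;
        public String displayName;
    }

    /** Mirrors the "queryResult" object; only a few fields are modelled. */
    static final class QueryResult {
        public String queryText;
        public String languageCode;
        public double intentDetectionConfidence;
        public Intent intent;
    }

    public String responseId;
    public String session;
    public QueryResult queryResult;

    /** Null-safe shortcut to the matched intent's display name. */
    String intentDisplayName() {
        if (queryResult == null || queryResult.intent == null) {
            return null;
        }
        return queryResult.intent.displayName;
    }
}
```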

And finally, the code below defines the answer for Google Assistant:

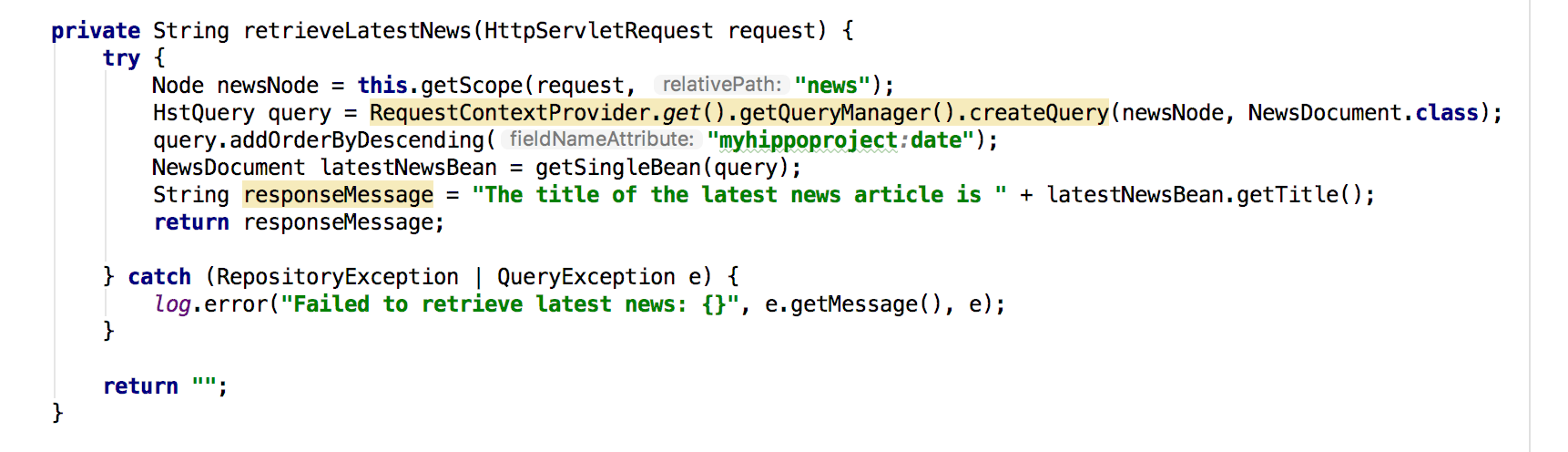
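For the blog post count, the sentence handed to the Assistant could be phrased along these lines. This is only a sketch: `BlogCountAnswer` and its wording are assumptions, and the count is passed in as a plain number rather than queried from the repository.

```java
/** Sketch: turns a blog post count into a sentence for the Assistant to say. */
final class BlogCountAnswer {

    private BlogCountAnswer() {
    }

    /** Picks a grammatical sentence for zero, one or many blog posts. */
    static String phrase(long count) {
        if (count < 0) {
            throw new IllegalArgumentException("count must not be negative");
        }
        if (count == 0) {
            return "There are no blog posts yet.";
        }
        if (count == 1) {
            return "There is 1 blog post.";
        }
        return "There are " + count + " blog posts.";
    }
}
```

Handling the singular and the empty case separately matters more for speech than for text, because an answer like "There are 1 blog posts" sounds noticeably wrong when read aloud.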

Instead of retrieving the number of existing blog posts, I can also ask for the latest news:

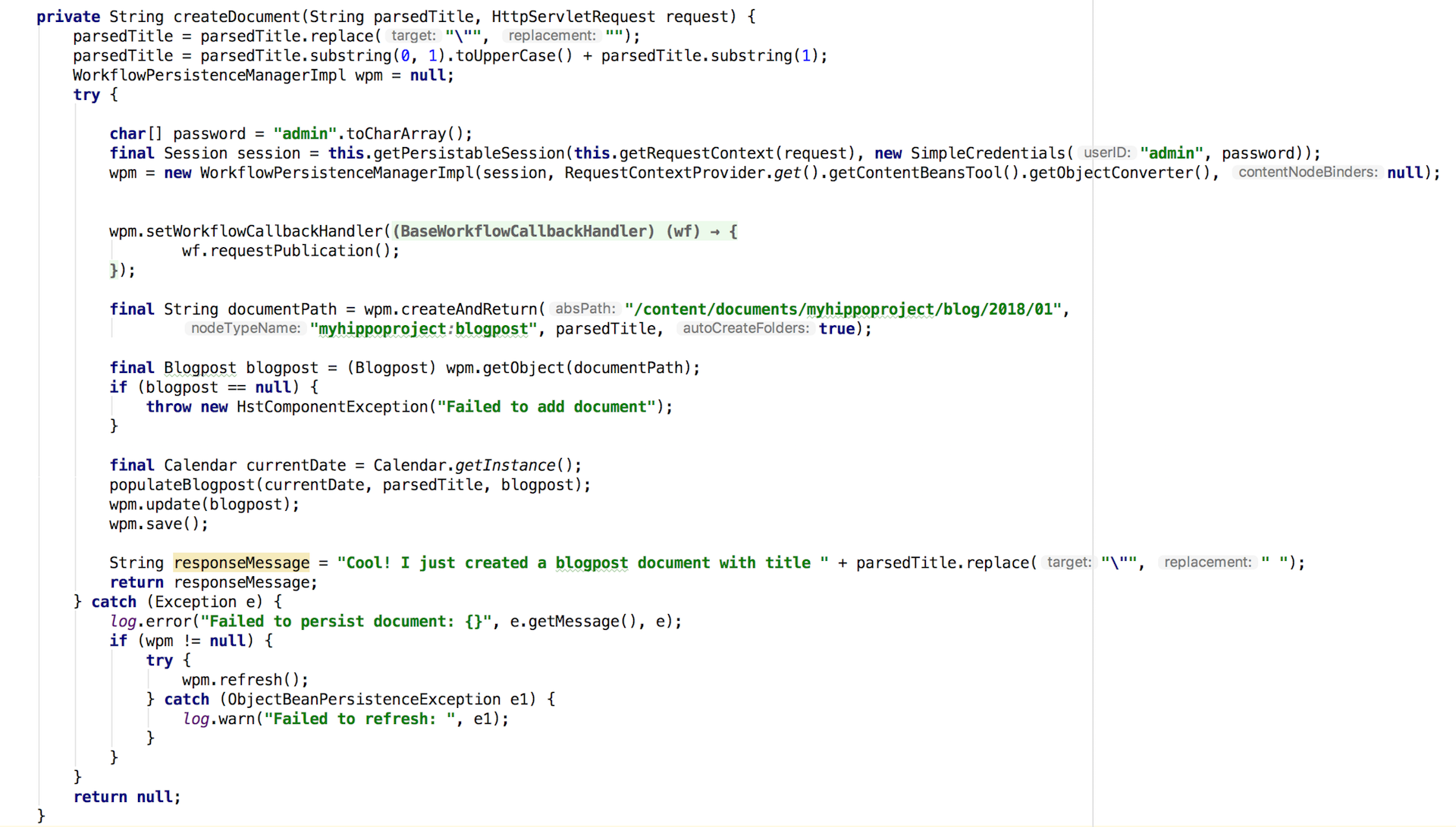
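A spoken answer for the latest news needs the titles joined into one natural sentence. The sketch below shows one way to do that, assuming the titles have already been fetched from the CMS; `LatestNewsAnswer` and the exact wording are hypothetical.

```java
import java.util.List;

/** Sketch: joins news titles into one sentence for the Assistant to read out. */
final class LatestNewsAnswer {

    private LatestNewsAnswer() {
    }

    /** Builds the sentence; the last title is joined with "and" for natural speech. */
    static String phrase(List<String> titles) {
        if (titles.isEmpty()) {
            return "There is no news right now.";
        }
        if (titles.size() == 1) {
            return "The latest news item is: " + titles.get(0) + ".";
        }
        String allButLast = String.join(", ", titles.subList(0, titles.size() - 1));
        String last = titles.get(titles.size() - 1);
        return "The latest news items are: " + allButLast + " and " + last + ".";
    }
}
```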

Or I can create a new document:

So this is how you can integrate a content management system with a voice assistant.

You can try out the integration yourself and take it for a spin. The complete code is available on the Bloomreach GitHub account.

We’ve come a long way since Siri’s first appearance in 2010. People are getting more comfortable interacting with voice assistants and they’re improving significantly over time.

Just like Don Draper, in good old Mad Men style, you can now put up your feet and dictate your next blog post to your CMS.
